Google hasn’t announced Gemini Omni. A Reddit user just found it anyway.

Someone opened their Gemini app, got a pop-up for a model they’d never heard of, and started generating video. The results have been making the rounds on Reddit for the past few days, mostly because of one clip.

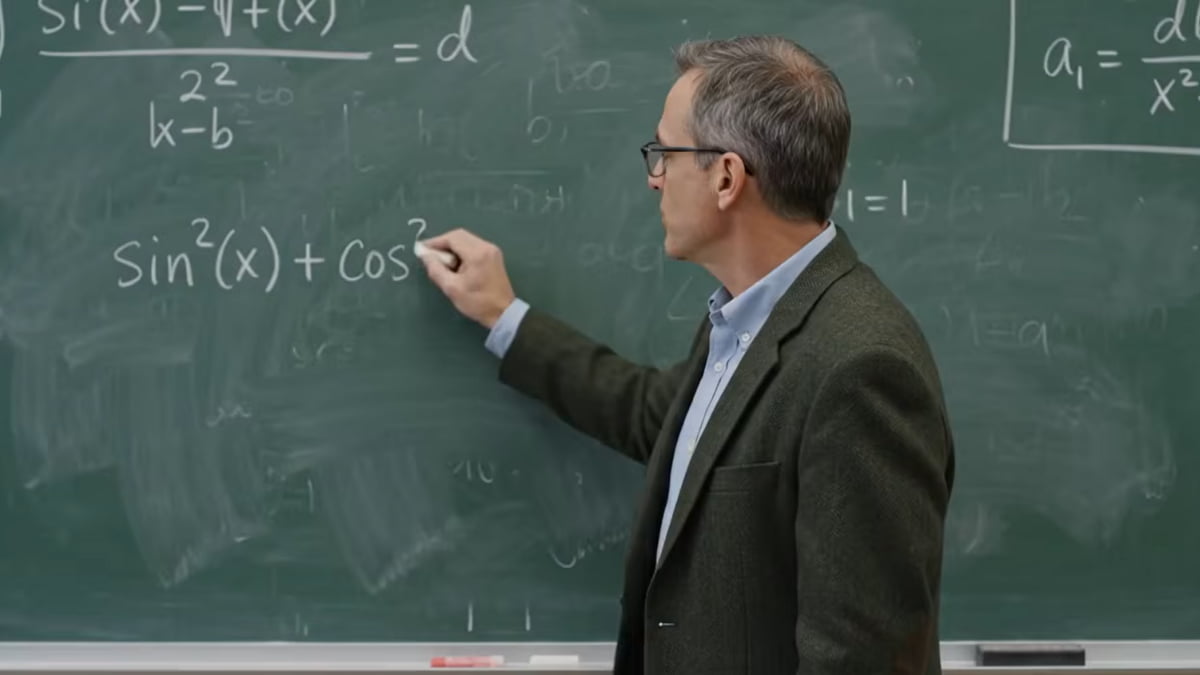

The chalkboard video is why.

A professor writes out a full mathematical proof in chalk and narrates as he goes. The text is legible, the delivery is natural, and the physics are mostly convincing. AI video models have historically struggled to render legible text. This one doesn’t. And it’s not just the text: the audio, the movement, and the overall realism are hitting a level that makes people genuinely uncomfortable in a way the usual AI video demos don’t.

It’s a leak. Google hasn’t said a word. I/O is next week. But whatever Omni is, the early results suggest something shifted.

What we know about Omni

Not a lot, officially. The user got a pop-up in the Gemini app prompting them to “create with Gemini Omni,” which described it as a new video generation model that can remix videos, edit directly in chat, and use templates. That’s the entire official description. Google hasn’t confirmed that the model exists.

Max Weinbach dug into the metadata and found that Omni appears to be an extension of Veo rather than something built from scratch. That tracks. Google has been developing Veo for a while, and the output here looks like a significant step forward from what Veo was producing, not a completely different direction.

I/O is next week. That’s almost certainly when this becomes official and we get actual details on what Omni is and how it fits into the broader Gemini lineup.

Where it still falls short

The chalkboard result is impressive. The spaghetti test is a different story.

The classic Will Smith eating spaghetti prompt, a long-running informal benchmark for AI video, got blocked by Omni’s guardrails, so the user rewrote it: two men at a seaside restaurant with white tablecloths, who approach the table and eat spaghetti while having a conversation.

Spaghetti appears from nowhere on plates that were empty seconds earlier. The eating motions don’t match the bites. The inconsistencies that the chalkboard video mostly avoids stack up quickly here. Another Reddit user ran the same prompt through ByteDance’s Seedance 2 and got a noticeably more consistent result.

So Omni isn’t uniformly ahead of everything. The text handling is genuinely new. Physical realism on complex interactions still has the rough edges you’d find elsewhere.


The usage question most people are ignoring

Those two generations, the chalkboard and the spaghetti, consumed 86% of this user’s daily quota on a Google AI Pro plan. There was some Gemini Flash usage the same day, so it’s not a perfectly clean number, but the direction is clear: video generation on Omni is expensive in terms of quota. Nobody is talking about that right now, but everyone will be the moment Google makes this official and people hit their limit within the first two prompts.

What comes next

After OpenAI shut down Sora earlier this year, Google said video is here to stay. Omni looks like the proof of that commitment. The chalkboard result alone suggests real progress on the text rendering problem, even if the model isn’t consistent across all prompts yet.

We’ll know the full picture next week. Until then, the chalkboard video is the thing worth watching twice.