Every editor has that one clip. The background needs to go but something always looks off. Hair gets chopped. Edges look fake. You look up enterprise solutions and they want a full subscription for one use case and even then it is not guaranteed to work.

After Effects background removal is not straightforward. CapCut does an okay job until it doesn’t. And when it fails on hair or fast motion you are back to square one.

MatAnyone 2 is an open source video matting model that does not just detect where the person ends and the background begins. It checks every pixel in the cutout and fixes the ones that are wrong. Hair strands, moving fabric, fast motion & it handles the details that make other tools look amateur.

Is it a one stop solution for everything? No. But for an open source tool with this level of capability it is absolutely worth a look.

What makes MatAnyone 2 different

Most matting tools generate a cutout and hand it to you. Good or bad that is what you get.

MatAnyone 2 has a built in quality evaluator that scores its own output before you ever see it. It looks at every pixel in the matte and flags the regions it got wrong. Then it goes back and fixes them. Hair boundaries, semi-transparent fabric, fast motion blur — the evaluator specifically targets the edges that cause problems everywhere else.

It was also trained on VMReal, a dataset of 28,000 real world video clips and 2.4 million frames. Not studio footage. Real world conditions with inconsistent lighting, movement, and complex backgrounds. That training data is a big reason why it handles challenging footage better than models trained on cleaner controlled datasets.

The jump from MatAnyone 1 to MatAnyone 2 is visible in their own side by side comparisons. Edges that were soft and smeared in version 1 are clean and accurate in version 2. Not a small incremental improvement.

The quality evaluator that changes everything

This is the part that actually separates MatAnyone 2 from everything else.

Most matting models are trained to produce a good output. MatAnyone 2 is trained to know when its output is bad. There is a real difference.

The Matting Quality Evaluator looks at every pixel in the generated matte and produces a map that marks which regions are reliable and which are wrong. The model then uses that map to focus its corrections exactly where they are needed. Boundary regions, hair strands, semi-transparent areas, the places that always cause problems get extra attention automatically.

What I find genuinely clever about this is that it does not need a perfect dataset to learn from. It figures out quality on its own without requiring ground truth labels for every frame. That is how they were able to scale training to 28,000 real world clips instead of being stuck with small controlled studio datasets.

The difference shows up most on challenging footage. Moving hair, windy conditions, complex backgrounds. The places where other tools give up and leave you with a mess.

Also Read: I Thought ElevenLabs Was the Only Option Until I Found This Free Voice Cloning Tool

Try it right now

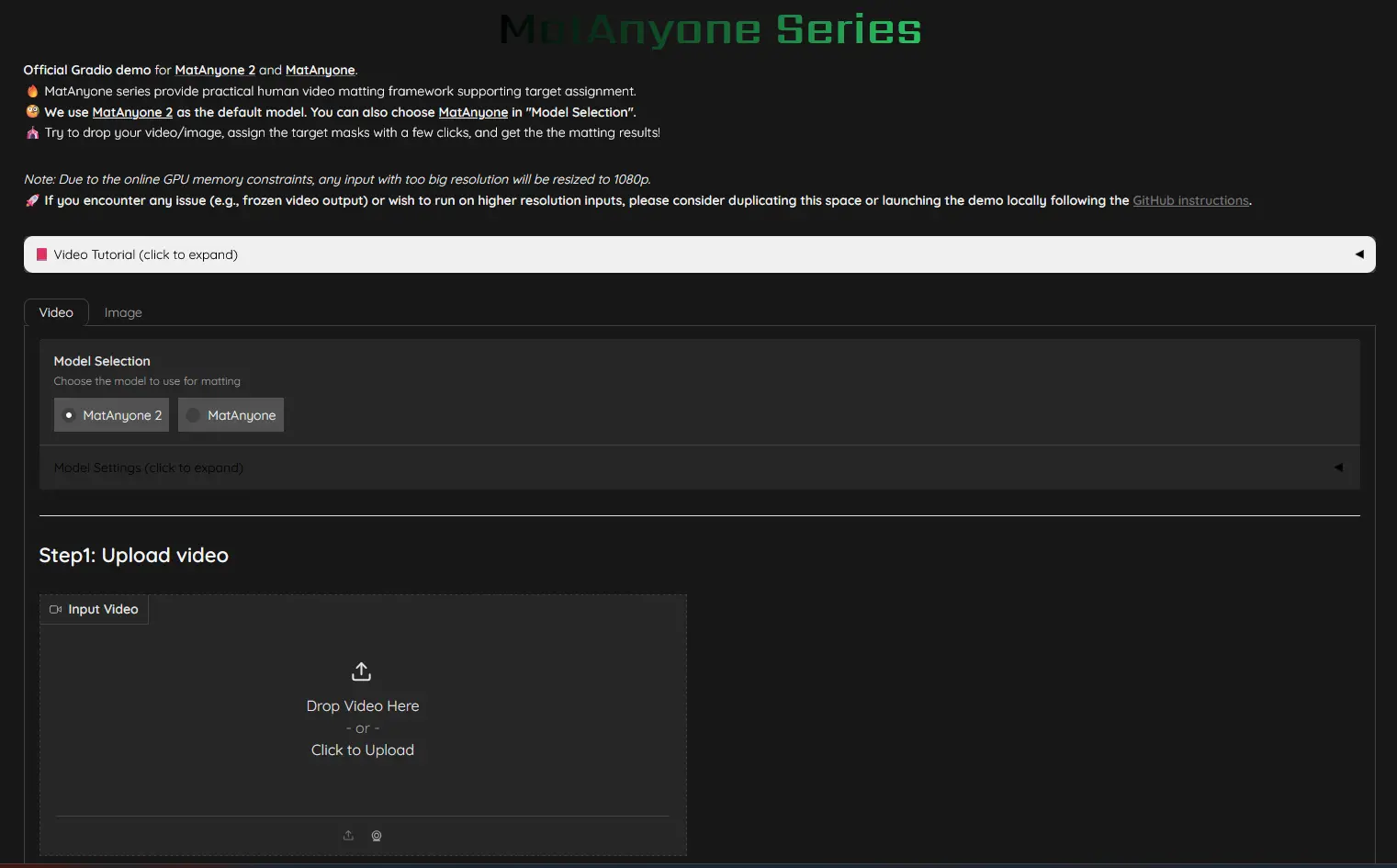

According to their official GitHub the full release is still in progress. Training codes, the quality evaluator checkpoint, and the VMReal dataset are all still coming. But the inference code is already live and MatAnyone 2 has an interactive demo live on HuggingFace right now.

Drop your video, click a few points to assign the target mask on the first frame and the model handles the rest. You can also run it locally if you prefer. The GitHub repo has clear setup instructions and the model checkpoint downloads automatically on first run.

Supports mp4, mov, and avi. Works on video folders too if you are processing individual frames.

One thing worth knowing, the demo runs MatAnyone 2 by default but you can switch to the original MatAnyone in the model selection if you want to compare the two directly. I’d recommend doing that on a clip with complex hair. The difference is obvious.

The part most skip

MatAnyone 2 is genuinely impressive for what it does. The quality evaluator idea is clever and the results on complex hair footage speak for themselves.

But two things worth knowing before you build anything around it.

First the license. This is NTU S-Lab License 1.0. Non-commercial use is completely free. If you need it for a commercial product you have to reach out to the team directly for permission, contact details are in the license file on GitHub.

Second it is not fully released yet. Training codes and the VMReal dataset are still coming. What you have right now is inference only. Good enough to test and experiment but not the full picture.

For creators who just want to remove backgrounds from personal projects or test what open source matting can do in 2026 it is absolutely worth trying.