Every serious coding agent (Claude Code, Cursor, Copilot, whatever you’re using) shares the same problem. The agent writes code, the compiler throws an error, and the agent has to read text written for a human engineer to figure out what went wrong and how to fix it.

It’s one of the main reasons agentic coding loops break down. Error message formats change between compiler versions. The same underlying problem gets described differently depending on context. There’s no built-in concept of a repair action, just prose that an agent has to parse, hoping it understood correctly.

Vercel Labs just released Zero, an experimental systems language built from day one around the idea that the compiler should talk to agents as clearly as it talks to humans. It’s Apache 2.0 licensed, available now, and genuinely interesting even at v0.1.1.

The development loop AI agents keep breaking

The standard agentic coding loop looks roughly like this. Agent writes code. Compiler emits an error. Agent reads the error. Agent tries to fix it. Compiler emits another error. Repeat until it either works or the agent gives up and asks a human.

The fragile part is step three. Compiler errors are written for engineers who can read a stack trace, recognize a pattern from experience, and make a judgment call about what the fix probably is. Agents don’t have that. They’re parsing unstructured text, matching it against training data, and making an educated guess about what action to take.

When it works, it looks like magic. When it doesn’t, the agent spins: it makes changes that don’t address the actual problem, introduces new errors while fixing old ones, or confidently does the wrong thing because the error message was ambiguous enough to support multiple interpretations.

Few languages, if any, were designed around fixing this before Zero. The assumption has always been that you fix the agent, not the compiler.

Zero thinks compilers should talk to agents too

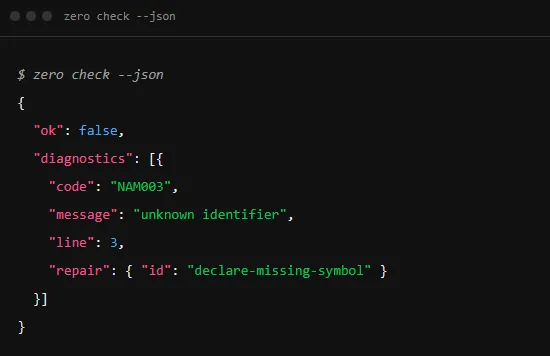

Zero’s answer starts with the diagnostic output. Run zero check --json and, instead of a block of formatted text, you get structured JSON containing a stable error code, a human-readable message, a line number, and a typed repair ID that tells the agent exactly what category of fix is needed.

Humans read the message. Agents read the code and the repair object. The same command surfaces both without a separate mode or secondary tool.
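To make that split concrete, here is a minimal Python sketch of how an agent harness might consume such output. It assumes zero check --json emits a JSON array of objects with `code`, `message`, `line`, and `repair` fields; those names, the sample payload, and the repair ID value are invented for illustration and are not Zero's documented schema.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Diagnostic:
    """One compiler diagnostic. Field names are assumptions, not Zero's schema."""
    code: str     # stable error code, e.g. "NAM003"
    message: str  # human-readable prose; wording may change between versions
    line: int     # source line the diagnostic points at
    repair: str   # typed repair ID naming the category of fix (hypothetical values)


def parse_diagnostics(raw: str) -> list:
    """Parse a JSON array of diagnostic objects (assumed layout) into records."""
    return [
        Diagnostic(code=d["code"], message=d["message"],
                   line=int(d["line"]), repair=d["repair"])
        for d in json.loads(raw)
    ]


def for_human(d: Diagnostic) -> str:
    # What a person reads: where the problem is and the prose explanation.
    return f"line {d.line}: {d.message}"


def for_agent(d: Diagnostic) -> tuple:
    # What an agent keys on: the stable code plus the repair category.
    return (d.code, d.repair)


# Invented sample payload; the message text and repair ID are made up.
SAMPLE = ('[{"code": "NAM003", "message": "unknown identifier: cout", '
          '"line": 4, "repair": "rename-identifier"}]')

for diag in parse_diagnostics(SAMPLE):
    print(for_human(diag))  # line 4: unknown identifier: cout
    print(for_agent(diag))  # ('NAM003', 'rename-identifier')
```

Both views come from the same parsed record, which mirrors the point that one command serves both audiences.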

That stable error code matters more than it might first appear. NAM003 means “unknown identifier” in Zero today, and it will mean the same thing in the next compiler version. An agent that has learned to handle NAM003 doesn’t have to relearn it when the message wording changes. The code is the contract.
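The practical payoff is that an agent's handling logic can key on the code and ignore the prose entirely. A minimal Python sketch, assuming diagnostics arrive as dicts with `code`, `message`, and `line` keys; only NAM003 comes from the article, while the handler, its return text, and both message wordings are hypothetical.

```python
from typing import Callable, Dict


def handle_unknown_identifier(diag: dict) -> str:
    # Hypothetical handler; a real agent would search scope or add a declaration.
    return f"resolve or declare the identifier on line {diag['line']}"


# Handling logic keyed on stable codes. NAM003 is from the article;
# everything else in this table is illustrative.
HANDLERS: Dict[str, Callable[[dict], str]] = {
    "NAM003": handle_unknown_identifier,
}


def route(diag: dict) -> str:
    """Pick a handler by diagnostic code, never by message text."""
    handler = HANDLERS.get(diag["code"])
    if handler is None:
        # Unknown code: fall back to looking it up (e.g. via zero explain).
        return f"unhandled code {diag['code']}: look it up before editing"
    return handler(diag)


# Two invented wordings of the same error still take the same path.
v1 = {"code": "NAM003", "message": "unknown identifier 'x'", "line": 3}
v2 = {"code": "NAM003", "message": "no binding named 'x' is in scope", "line": 3}
assert route(v1) == route(v2)
```

Because nothing in `route` reads `message`, a compiler release that rewords the text leaves the agent's behavior unchanged.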

The toolchain is unified into a single binary: zero check, zero build, zero run, zero fix, zero explain, and several others are all subcommands of the same CLI. For an agent operating in a loop, this matters because it never has to reason about which tool handles which task. One binary, predictable subcommands, and a consistent output format throughout.
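A minimal Python sketch of what a single-binary CLI buys a harness: one helper builds every invocation. The subcommands and flags are the ones this article mentions; whether and where a file path is passed to each subcommand is an assumption, and "path/to/file" is a placeholder. `run_zero` is defined but not called here, since it needs a Zero install.

```python
import subprocess
from typing import List


def zero_argv(subcommand: str, *args: str) -> List[str]:
    """Build the argv for one subcommand of the single zero binary."""
    return ["zero", subcommand, *args]


def run_zero(subcommand: str, *args: str) -> subprocess.CompletedProcess:
    # One code path covers every task because every task is the same executable.
    return subprocess.run(zero_argv(subcommand, *args),
                          capture_output=True, text=True, check=False)


# Invocations mentioned in the article, built through the same helper.
# The file argument position for `fix` is an assumption to verify against the CLI.
CHECK = zero_argv("check", "--json")
EXPLAIN = zero_argv("explain", "NAM003")
FIX_PLAN = zero_argv("fix", "--plan", "--json", "path/to/file")
SKILLS = zero_argv("skills", "get", "zero", "--full")
SIZE = zero_argv("size", "--json")
```

With separate tools per task, the harness would need a lookup from task to executable; here the task is just the first argument.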

The compiler doesn’t just fail, it explains how to repair things

This is where Zero goes further than just cleaning up diagnostic output.

Two subcommands are specifically designed for the agent repair loop. zero explain takes a diagnostic code and returns a structured explanation of what it means. An agent hits NAM003, calls zero explain NAM003, and gets back a precise description of the problem without scraping documentation that may be out of date or mismatched with the compiler version actually running.

zero fix goes one step further. Run zero fix --plan --json against a file and it emits a machine-readable fix plan: a structured description of exactly what changes to make to resolve the diagnostic. The agent doesn’t have to infer the fix from the error. The compiler tells it.
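Zero's actual fix-plan schema isn't described here, so the following Python sketch invents a deliberately simple one: an `edits` list of whole-line replacements, each with a 1-based `line` and a `replace_with` string. It is only meant to show why a structured plan is easier for an agent to act on than prose, since applying it is mechanical rather than interpretive. The placeholder text stands in for real Zero source.

```python
import json


def apply_fix_plan(source: str, plan_json: str) -> str:
    """Apply whole-line replacements from a fix plan (hypothetical schema).

    Assumed shape: {"edits": [{"line": <1-based int>, "replace_with": <str>}]}
    """
    plan = json.loads(plan_json)
    lines = source.split("\n")
    for edit in plan["edits"]:
        idx = edit["line"] - 1
        if not 0 <= idx < len(lines):
            # Refuse rather than guess: a stale plan should fail loudly.
            raise ValueError(f"edit targets line {edit['line']}; file has {len(lines)} lines")
        lines[idx] = edit["replace_with"]
    return "\n".join(lines)


# Placeholder text stands in for real Zero source here.
before = "alpha\nbeta_typo\ngamma"
plan = '{"edits": [{"line": 2, "replace_with": "beta"}]}'
print(apply_fix_plan(before, plan).split("\n"))  # ['alpha', 'beta', 'gamma']
```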

There’s also zero skills, which serves version-matched guidance directly through the CLI. Running zero skills get zero --full returns focused workflows covering Zero syntax, diagnostics, builds, packages, testing, and agent edit loops, all matched to the installed compiler version. This means agents working with Zero don’t need to scrape external documentation that may be out of sync with what’s actually installed. The guidance lives in the toolchain, not on a webpage somewhere.

The combined picture is a repair loop where the agent writes code, gets structured JSON when something breaks, looks up the error code directly, retrieves a machine-readable fix plan, applies it, and checks again. No human translation required at any step.
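The loop described in the paragraph above can be sketched in Python. To keep it runnable without a Zero install, each compiler step is passed in as a plain function; in a real harness those functions would shell out to zero check --json, zero explain, and zero fix --plan --json and parse their output. The dict shapes, the `max_rounds` cap, and the toy stand-ins are assumptions, not Zero's API.

```python
from typing import Callable, List, Tuple


def repair_loop(
    source: str,
    check: Callable[[str], List[dict]],      # stands in for zero check --json
    explain: Callable[[str], str],           # stands in for zero explain <CODE>
    plan_fix: Callable[[str], dict],         # stands in for zero fix --plan --json
    apply_plan: Callable[[str, dict], str],  # applies the returned plan to source
    max_rounds: int = 5,
) -> Tuple[str, bool, List[str]]:
    """Check, explain, plan, apply, and re-check until clean or out of rounds.

    Returns (final_source, is_clean, notes).
    """
    notes: List[str] = []
    for _ in range(max_rounds):
        diagnostics = check(source)
        if not diagnostics:
            return source, True, notes
        for d in diagnostics:
            # Look each code up so the agent has context for the fix.
            notes.append(f"{d['code']}: {explain(d['code'])}")
        source = apply_plan(source, plan_fix(source))
    # Out of rounds: report whether the final edit happened to fix everything.
    return source, not check(source), notes


# Toy stand-ins so the loop runs without a Zero install.
def fake_check(src: str) -> List[dict]:
    return [{"code": "NAM003"}] if "typo" in src else []


result = repair_loop(
    "x = typo",
    check=fake_check,
    explain=lambda code: "unknown identifier",
    plan_fix=lambda src: {"old": "typo", "new": "fixed"},
    apply_plan=lambda src, p: src.replace(p["old"], p["new"]),
)
print(result)  # ('x = fixed', True, ['NAM003: unknown identifier'])
```

The `max_rounds` cap is a design choice for the harness: structured output makes each step reliable, but a bounded loop still guards against a fix that never converges.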

Why predictable software matters more when AI writes code

Zero’s capability-based I/O design isn’t just a language preference. It has a specific consequence for agentic workflows that’s worth understanding.

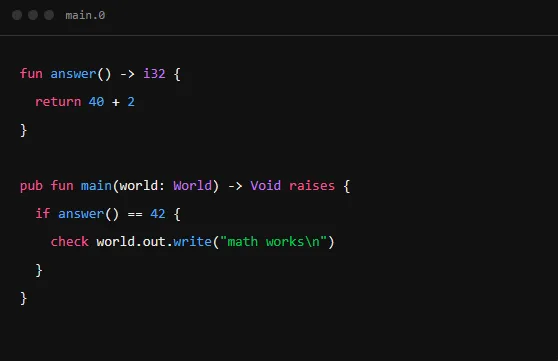

In Zero, if a function touches the outside world (writing to stdout, reading a file, making a network call), its signature says so explicitly through a capability object called World. A function that doesn’t receive World, or something derived from it, cannot perform I/O; the compiler rejects it at compile time.

There are no hidden globals, no implicit async, and no magic process objects. Every effect is visible in the code itself.

When a human writes code this way, it’s good practice. When an agent writes code this way, it’s something more useful: it’s auditable. You can read a Zero function signature and know immediately what it can and cannot do without tracing through the implementation. An agent reviewing its own output can verify effects without running anything. A human reviewing agent-generated code can spot unexpected capabilities at a glance.
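Python cannot enforce this at compile time, so the following is only an analogy for the shape of the idea, not Zero code. Effects are reachable solely through an explicitly passed capability object, so a function's parameter list shows whether it can do I/O. The name `World` is borrowed from the article; the derived `Stdout` capability and all methods are invented for this sketch. In Zero the compiler rejects I/O without the capability; in Python the discipline is merely a convention.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Stdout:
    """A narrower capability derived from World: it can only write lines."""
    sink: List[str]

    def write_line(self, text: str) -> None:
        self.sink.append(text)


@dataclass
class World:
    """Hypothetical root capability; output is captured in a list, not printed."""
    lines: List[str] = field(default_factory=list)

    def stdout(self) -> Stdout:
        # Hand out only the effect the callee needs, sharing the same sink.
        return Stdout(self.lines)


def add(a: int, b: int) -> int:
    # No capability parameter: by convention, no I/O happens here.
    return a + b


def report(out: Stdout, a: int, b: int) -> None:
    # The Stdout parameter advertises the one effect this function has.
    out.write_line(f"{a} + {b} = {add(a, b)}")


world = World()
report(world.stdout(), 2, 3)
# world.lines is now ['2 + 3 = 5']
```

Reading the signatures alone, `add` is pure and `report` can only write lines, which is the kind of at-a-glance audit the paragraph above describes.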

The binary size story points in the same direction. Zero targets native executables under 10 KiB, with no mandatory garbage collector, no hidden allocator, and no mandatory event loop. For agents building small tools that need to run in constrained environments, that predictability has real value. zero size --json reports artifact size before code generation when possible, so the agent knows the cost before it commits.
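A minimal Python sketch of the budget check an agent might run before committing to a build. The `{"bytes": N}` shape is an assumption, since the article does not show zero size --json output; the arithmetic is simply 10 KiB = 10 × 1024 = 10,240 bytes.

```python
import json

KIB = 1024
BUDGET_BYTES = 10 * KIB  # the "under 10 KiB" target: 10,240 bytes


def within_budget(size_json: str, budget: int = BUDGET_BYTES) -> bool:
    """True if the reported artifact size is strictly under the budget.

    Assumes output shaped like {"bytes": N}; the real field name in
    zero size --json output is not documented in this article.
    """
    return json.loads(size_json)["bytes"] < budget


print(within_budget('{"bytes": 8704}'))   # True
print(within_budget('{"bytes": 10240}'))  # False: exactly 10 KiB is not "under"
```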

Zero is still experimental

Zero is at v0.1.1. The compiler, standard library, and language spec are explicitly not stable. There’s no package registry yet. Cross-compilation support is limited to a documented subset of targets. The VS Code extension covers syntax highlighting and nothing else.

Vercel Labs is describing this as an experiment worth tracking, not a production dependency. That framing is honest and worth taking seriously. The ideas behind Zero (structured diagnostics, typed repair metadata, version-matched agent guidance, and explicit capability declarations) are a genuinely new combination at the toolchain level. The execution is early.

For AI engineers thinking about how agentic coding workflows actually break down in practice, the repo is worth an afternoon. The permissive Apache 2.0 license means you can build on it freely, as long as you meet its attribution and notice requirements. The zero skills subcommand alone is an interesting design pattern for anyone building tools that agents will use.

For anyone considering replacing their current systems language with Zero in production, that’s not what this is yet. The language itself says so. Come back when the spec stabilizes.

The more interesting question Zero raises is whether structured agent-first compiler output becomes the expectation across the industry once developers see what it actually enables. That shift, if it happens, won’t start with Zero replacing Rust. It’ll start with enough people trying Zero that the next generation of toolchain designers builds this way by default.