Every few years something shows up in AI that makes people stop and argue. Not argue about which model is better or whose benchmark is more honest. Argue about whether the rules just changed. SubQ is that argument right now.

A Miami-based startup called Subquadratic came out of stealth last week with a single claim that’s either the most important architectural shift since the 2017 transformer paper or the most sophisticated AI hype in recent memory. They say they’ve built the first LLM that doesn’t rely on quadratic attention and that this lets them run a 12 million token context window at roughly one-fifth the cost of frontier models.

The AI research community split within hours. Half are losing their minds. Half are explaining why this doesn’t count. The truth is probably more interesting than either camp.

Here’s what we actually know.

What SubQ actually claims

The problem SubQ is trying to solve is real and has been real for years. Standard transformer attention compares every token against every other token in a sequence. That means doubling your input doesn’t double the cost, it quadruples it. The industry built an entire ecosystem of workarounds around this limitation. RAG systems, chunking strategies, retrieval pipelines, agentic orchestration: most of the complexity in modern AI deployments exists because you can’t just put everything in the context window and let the model figure it out.
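The quadrupling is easy to check with a toy count of comparisons. This is a minimal sketch that treats attention cost as nothing more than the number of token pairs; `attention_pairs` is an illustrative helper, not anything from SubQ.

```python
def attention_pairs(n_tokens: int) -> int:
    """Query-key comparisons dense attention makes over a sequence of n_tokens."""
    return n_tokens * n_tokens


for n in (1_000, 2_000, 4_000):
    print(f"{n:>6} tokens -> {attention_pairs(n):>12,} comparisons")

# Doubling the input multiplies the comparison count by four.
growth = attention_pairs(2_000) / attention_pairs(1_000)
print(growth)
```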

SubQ says they solved the underlying problem. Their architecture, Subquadratic Sparse Attention, or SSA, uses content-dependent selection. Instead of comparing every token against every other token, the model selects which positions actually matter for each query and computes attention only over those. Compute scales linearly with context length rather than quadratically. At 1 million tokens they’re claiming 52x faster than FlashAttention. At 12 million tokens attention compute drops by almost 1,000x versus standard transformers.
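One rough way to read the 1,000x figure is to count comparisons again. The sketch below assumes each query attends to a fixed budget of `k` selected positions. That budget is a hypothetical parameter, not a number Subquadratic has published, and the model ignores whatever the selection step itself costs.

```python
def dense_cost(n: int) -> int:
    """Comparisons for dense attention: every token against every token."""
    return n * n


def sparse_cost(n: int, k: int) -> int:
    """Comparisons if each of n queries attends to at most k selected positions."""
    return n * min(k, n)


def reduction(n: int, k: int) -> float:
    """How many times fewer comparisons the sparse variant makes."""
    return dense_cost(n) / sparse_cost(n, k)


n = 12_000_000
k = 12_000  # hypothetical budget, chosen only so the ratio comes out at 1,000

print(reduction(n, k))
# With k fixed, doubling the context doubles the sparse cost instead of quadrupling it.
print(sparse_cost(2 * n, k) / sparse_cost(n, k))
```

Under this counting model the reduction is simply `n / k`, so the headline ratio depends entirely on how many positions each query keeps.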

The production API ships with a 1 million token context window. The research model reaches 12 million. Both run at roughly one-fifth the cost of Opus or GPT-5.5 for comparable workloads.

Three products launched simultaneously in private beta: a full-context API; SubQ Code, a CLI coding agent that loads entire codebases into a single context window; and SubQ Search, a free long-context research tool. All three require access requests at subq.ai.

The team includes researchers from Meta, Google, Oxford, Cambridge, ByteDance, and Adobe. They raised $29 million in seed funding at a $500 million valuation from investors including the Tinder co-founder and early backers of Anthropic and OpenAI.

What 12 million tokens actually unlocks

Numbers this large are hard to reason about, so here’s what actually fits in 12 million tokens.

The entire Python 3.13 standard library is roughly 5.1 million tokens. Six months of pull requests against the React codebase is around 7.5 million tokens. A mid-sized company’s full codebase including tests and documentation fits comfortably. So does an entire year of customer support transcripts for a mid-market SaaS product, every legal filing in a complex case, or about 120 average-length books in a single prompt.
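The figures above turn into a quick budget check. The token counts are the article’s rough estimates; the `fits` helper is an illustrative assumption, not part of any SubQ tooling.

```python
CONTEXT_BUDGET = 12_000_000  # the research model's claimed window

# Rough token estimates quoted above.
PYTHON_STDLIB = 5_100_000
REACT_PULL_REQUESTS = 7_500_000


def fits(*token_counts: int, budget: int = CONTEXT_BUDGET) -> bool:
    """True if the combined token counts fit inside the context budget."""
    return sum(token_counts) <= budget


print(fits(PYTHON_STDLIB))
print(fits(REACT_PULL_REQUESTS))
# Each fits alone, but the two together come to about 12.6 million tokens.
print(fits(PYTHON_STDLIB, REACT_PULL_REQUESTS))

# "About 120 books" implies roughly this many tokens per book.
tokens_per_book = CONTEXT_BUDGET // 120
print(tokens_per_book)
```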

The practical implication if SubQ delivers is that RAG becomes optional for a much wider category of work. The entire premise of retrieval-augmented generation is that you can’t fit the relevant data into context so you build a search layer to pull in the pieces that matter. SubQ’s pitch is that for many of those workloads you skip that entire stack and just put the documents in.

That doesn’t eliminate RAG entirely. Real-time data, fast-changing information, and personalization still need retrieval. But static knowledge bases, internal codebases, long-running agent sessions, and document-heavy workflows form a category of work where the economics and the architecture both change if the claims hold up.

SubQ Code is the most directly testable version of this claim. It positions itself as a long-context layer that sits underneath Claude Code, Codex, and Cursor, handling the token-heavy context gathering while the primary agent handles reasoning. They claim 25% lower cost and 10x faster codebase exploration. That’s specific enough to verify quickly once people get access.

How SSA actually works

Most attempts to make attention cheaper have made the same tradeoff somewhere. Fixed-pattern sparse attention reduces compute by deciding in advance which positions a token can attend to: sliding windows, strided patterns, fixed masks. The problem is the routing decision happens before the model knows what it’s looking for. When the relevant information falls outside the pattern it simply doesn’t get seen.
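A sliding window makes the failure mode concrete. In this minimal sketch the window size and the toy token list are made up for illustration; the point is only that visibility is decided by position, before any content is examined.

```python
def sliding_window_positions(query_pos: int, window: int) -> range:
    """Positions a causal sliding-window pattern lets the query attend to."""
    start = max(0, query_pos - window + 1)
    return range(start, query_pos + 1)


# A fact stated early, a lot of filler, then a question about the fact.
tokens = ["the", "key", "is", "42"] + ["filler"] * 20 + ["what", "is", "the", "key", "?"]

query_pos = len(tokens) - 1      # the final "?" token
fact_pos = tokens.index("42")    # where the answer lives

visible = sliding_window_positions(query_pos, window=8)

# The answer sits outside the window, so the query never compares against it.
print(fact_pos in visible)
```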

State space models like Mamba take a different approach and remove the all-pairs comparison entirely. They achieve linear scaling but introduce a different constraint: the model compresses information into a fixed-size state as the sequence grows. It’s good at preserving gist and structure. It’s weaker at retrieving a specific fact introduced a million tokens ago because that fact may no longer exist in recoverable form.
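The fixed-size-state constraint shows up even in a toy recurrence. This is a deliberately simplified scalar sketch, not Mamba itself (which uses input-dependent parameters and a much larger state), and the `decay` value is an arbitrary assumption.

```python
def run_recurrence(inputs, decay: float = 0.9) -> float:
    """Fold a sequence into one number: state = decay * state + (1 - decay) * x."""
    state = 0.0
    for x in inputs:
        state = decay * state + (1.0 - decay) * x
    return state


# Two sequences that differ only in the very first token.
with_fact = [1.0] + [0.0] * 200
without_fact = [0.0] + [0.0] * 200

final_with = run_recurrence(with_fact)
final_without = run_recurrence(without_fact)

# One pass over the input, constant memory, but after 200 steps the early
# token's contribution has decayed to almost nothing in the final state.
print(abs(final_with - final_without))
```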

Hybrid architectures try to get both by combining efficient layers with retained dense attention layers. It works in practice but the dense layers remain load-bearing. As context grows their quadratic cost dominates and you’re back where you started.
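A toy cost model shows why the dense layers end up dominating. Every number here (layer counts, per-token cost of the efficient layers) is a hypothetical chosen for illustration, not a description of any real hybrid model.

```python
def dense_share(n_tokens: int, dense_layers: int = 4,
                efficient_layers: int = 28, per_token_cost: int = 4_096) -> float:
    """Fraction of total sequence-mixing cost spent in the dense attention layers."""
    dense = dense_layers * n_tokens * n_tokens                   # quadratic in n
    efficient = efficient_layers * n_tokens * per_token_cost     # linear in n
    return dense / (dense + efficient)


for n in (8_000, 128_000, 1_000_000):
    print(f"{n:>9,} tokens: dense layers take {dense_share(n):.1%} of the cost")
```

Even with only a handful of dense layers, their share of the total climbs toward 100% as the context grows, which is the "back where you started" effect.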

SSA is different in one specific way. The selection happens based on content not position. For each query the model decides which positions actually carry signal and computes exact attention only over those. Not approximated attention. Exact attention over a smaller set. The rest gets skipped entirely.
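The idea of content-based selection followed by exact attention over the selected set can be sketched with plain top-k. This is not SubQ’s implementation, which hasn’t been published. Note that this toy scores every key in order to pick the top `k`, so its selection step still touches every position; how SSA selects positions cheaply is exactly what remains undisclosed. All names below are illustrative.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def select_positions(query, keys, k):
    """Pick the k key positions whose content best matches the query.
    Toy selector: it scores every key, which a real subquadratic method must avoid."""
    scores = [dot(query, key) for key in keys]
    ranked = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:k])


def sparse_attention(query, keys, values, k):
    """Exact softmax attention, computed only over the selected positions."""
    idx = select_positions(query, keys, k)
    scale = 1.0 / math.sqrt(len(query))
    weights = softmax([dot(query, keys[i]) * scale for i in idx])
    dim = len(values[0])
    out = [sum(w * values[i][d] for w, i in zip(weights, idx)) for d in range(dim)]
    return idx, out


# Position 0 and position 7 match the query; the six positions between are filler.
keys = [[4.0, 0.0]] + [[0.0, 1.0]] * 6 + [[3.0, 0.0]]
values = [[1.0, 0.0]] + [[0.0, 1.0]] * 6 + [[1.0, 0.0]]
query = [1.0, 0.0]

selected, output = sparse_attention(query, keys, values, k=2)
print(selected, output)
```

The selected positions are chosen by what the keys contain, not by how close they sit to the query, so a match at the very start of the sequence is still reachable.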

This gives it three properties that previous approaches couldn’t achieve together: linear scaling, content-dependent routing, and the ability to retrieve from arbitrary positions regardless of where they appear in the sequence. Prior approaches hit two of those three. SSA claims all three.

Whether that claim holds at frontier scale is the question the research community is still arguing about.

The benchmark numbers and where they get complicated

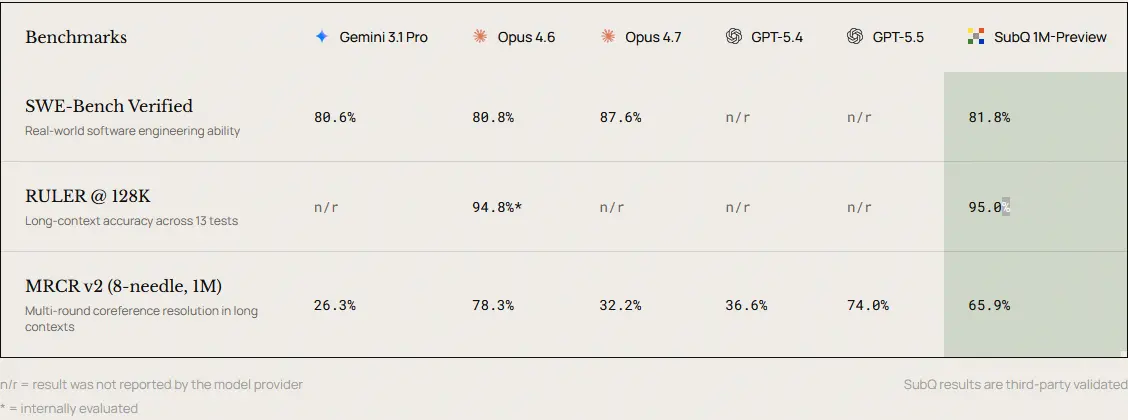

SubQ released three benchmark results at launch, all third-party verified according to the company.

RULER at 128K tests long-context retrieval across multi-hop tasks, aggregation, and variable tracking. SubQ scores 95.0% against Claude Opus 4.6’s 94.8%. That’s essentially parity. It’s worth noting, but RULER at 128K is close to saturated across frontier models, so matching Opus here is more about not regressing than winning.

MRCR v2 at 1 million tokens is the more interesting result. It tests whether a model can locate and integrate multiple non-adjacent pieces of evidence across a long context, closer to real work than simple needle-in-a-haystack retrieval. SubQ scores 65.9%. Gemini 3.1 Pro scores 26.3%. GPT-5.4 scores 36.6%.

Here’s where it gets complicated. The Claude Opus 4.6 score Subquadratic uses for the MRCR comparison in its press materials is 32.2%. Their own technical post lists Opus 4.6 at 78.3% on the same benchmark. That’s a significant discrepancy and Subquadratic hasn’t explained it publicly. If the 78.3% number is accurate, SubQ trails Opus on MRCR rather than leading it.
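How much the choice of baseline matters is a two-line calculation. The scores are the ones quoted above; `margin` is just an illustrative helper.

```python
SUBQ_MRCR = 65.9
OPUS_PRESS_NUMBER = 32.2      # the figure used in the press comparison
OPUS_TECH_POST_NUMBER = 78.3  # the figure in Subquadratic's own technical post


def margin(score: float, baseline: float) -> float:
    """Percentage-point gap between a score and a baseline (positive means ahead)."""
    return round(score - baseline, 1)


print(margin(SUBQ_MRCR, OPUS_PRESS_NUMBER))      # lead in points against the press number
print(margin(SUBQ_MRCR, OPUS_TECH_POST_NUMBER))  # negative: a deficit against the tech-post number
```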

On SWE-Bench Verified, SubQ scores 81.8% against Opus 4.6’s 80.8% and Gemini 3.1 Pro’s 80.6%. That’s competitive, but Opus 4.7 sits at 87.6% on the same benchmark, which puts a ceiling on how impressive 81.8% reads.

The compute efficiency part is the strongest part of the launch. The accuracy benchmarks are competitive without being dominant, and the MRCR discrepancy is the number worth watching when the full technical report drops.

Why researchers are not easily convinced

The doubt isn’t personal. It’s historical.

Subquadratic attention is one of the most heavily explored areas in machine learning. Mamba, RWKV, Hyena, RetNet, linear attention, DeepSeek Sparse Attention: each one demonstrated linear scaling on benchmarks. Each one ran into the same wall. Pure subquadratic architectures match dense attention at small and medium scale, then visibly underperform at frontier scale or end up in hybrid configurations that bring the quadratic cost back in through the dense layers.

The pattern is consistent enough that serious researchers are skeptical by default when a new subquadratic claim appears, regardless of who’s making it.

Two specific concerns surfaced fast after the SubQ launch. AI engineer Will Depue posted publicly that SubQ is almost certainly a sparse attention finetune of Kimi or DeepSeek, meaning the strong base model performance comes from existing open-source weights and SSA is the layer added on top rather than a ground-up architecture. Subquadratic has neither confirmed nor denied this and hasn’t released weights or a full technical report.

The second concern is about the MRCR discrepancy mentioned above. Using the lower Opus number for comparison while the higher number exists in your own technical documentation is the kind of thing that makes researchers distrust everything else in the launch.

None of this proves SubQ doesn’t work. It means the burden of proof is high and the evidence so far is incomplete.

What to actually watch for

The technical report is the first thing that matters. Subquadratic says it’s coming soon. When it drops, the architecture details will either hold up to scrutiny or reveal where the quadratic cost is actually hiding. The research community will find it fast if it’s there.

Independent benchmarks from labs that Subquadratic doesn’t pay are the second thing. The current results are third-party verified according to the company, but third-party verification and independent replication are different things. When other labs run SubQ on their own evaluation setups, the numbers will either confirm or diverge from what’s been published.

The weights question matters too. If Subquadratic releases model weights the community can directly test whether SSA is genuinely subquadratic or whether the dense layers are doing more work than the architecture diagrams suggest.

SubQ Code is the most immediately testable product for developers. Load a real codebase you understand well and see whether the 25% cost reduction and 10x exploration speed claims hold. That’s the kind of test that doesn’t require a technical report.

The Claim Is Big. The Evidence Isn’t There Yet.

SubQ is the most architecturally interesting LLM launch since DeepSeek V3. That’s true whether or not the 1,000x number survives contact with independent reviewers.

A linear-scaling attention mechanism that holds up on RULER, stays competitive on MRCR, and matches frontier models on SWE-Bench while running at one-fifth the cost is genuinely new if it’s what it claims to be. The team is credible. The funding is real. The problem they’re solving is real.

The honest version is that the marketing is ahead of the evidence right now. The MRCR discrepancy needs explaining. The weights need releasing. The technical report needs publishing. Until those three things happen, SubQ earns a careful watch and an early access request if you run long-context workloads.

The next few weeks will decide which direction this goes. Either the evidence confirms the headline numbers and SubQ permanently changes how long-context AI gets built, or the gap between what was claimed and what independent testing finds becomes the new headline.