Your image generator has never seen today. It was trained months ago, maybe longer, and everything it draws comes from that frozen snapshot of the world. Ask it to generate a current news moment, a product that launched last month, or anything that requires knowing what’s happening right now, and it fills in the gaps with a confident guess. Sometimes that guess is close. Often it isn’t.

Gen-Searcher does something most of the mainstream tools don’t do. Before it draws a single pixel, it goes and looks things up. It searches the web. It browses sources. It pulls visual references. Then it generates. The result is an image grounded in actual current information.

It’s open source, the weights are on Hugging Face, and the team released everything: the code, the training data, and the benchmark.

The idea behind Gen-Searcher

Most attempts to improve image generation focus on the generation itself: better architecture, more parameters, cleaner training data. Gen-Searcher goes one step back and asks a different question: what if the model knew what it was drawing before it drew it?

The answer is an agent that behaves less like an image generator and more like a researcher who happens to draw. You give it a prompt. Instead of immediately producing an image, it searches the web, reads sources, pulls visual references, cross-checks what it finds and only then generates.

Think of it as the difference between asking someone to draw a place they’ve never visited versus someone who just spent ten minutes looking it up. Same drawing ability. Completely different output.

That’s the whole idea. Not a bigger model. Not a newer training cutoff. Just a model that looks things up first.

How it actually works

Gen-Searcher is built on Qwen3-VL-8B, a multimodal model that can read both text and images. The team trained it in two stages.

First came supervised fine-tuning on 10,000 carefully selected examples that teach the model what good research before generation looks like: which sources to trust, how to browse for evidence, and when it has enough information to actually draw.

Then came reinforcement learning on 6,000 examples, and this is where it gets interesting. Instead of just imitating correct behavior, the model learns from outcomes. Did the generated image actually match reality? Was the research thorough enough? RL pushes it to get better at the parts that matter.

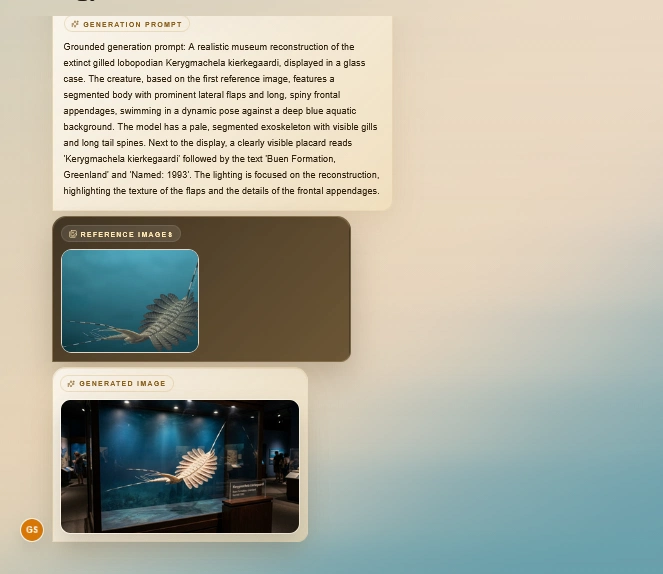

The result is a model that runs a full agentic loop every time you give it a prompt. It breaks the task down, searches the web, browses pages for evidence, looks for visual references, reasons across everything it found, and then generates. That is closer to how a person researches a subject before committing to a drawing.
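
The loop described above can be sketched in a few lines of Python. This is a simplified illustration, not the project’s actual code: every name here (`Evidence`, `research_then_generate`, and the six callables passed in) is hypothetical, and the real agent decides for itself when it has searched enough, whereas this sketch walks a fixed plan.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Evidence:
    """Everything gathered for one prompt (hypothetical container)."""
    text_notes: List[str] = field(default_factory=list)
    image_refs: List[str] = field(default_factory=list)


def research_then_generate(
    prompt: str,
    plan: Callable[[str], List[str]],          # prompt -> search queries
    search: Callable[[str], List[str]],        # query -> result URLs
    browse: Callable[[str], str],              # URL -> extracted notes
    find_images: Callable[[str], List[str]],   # query -> reference image URLs
    compose: Callable[[str, Evidence], str],   # prompt + evidence -> grounded prompt
    generate: Callable[[str, List[str]], bytes],  # grounded prompt + refs -> image
    max_pages_per_query: int = 2,
) -> bytes:
    """Research first, generate last. All tools are injected by the caller."""
    evidence = Evidence()
    for query in plan(prompt):                               # break the task down
        for url in search(query)[:max_pages_per_query]:      # search the web
            evidence.text_notes.append(browse(url))          # browse for evidence
        evidence.image_refs.extend(find_images(query))       # collect visual references
    grounded_prompt = compose(prompt, evidence)              # reason across the findings
    return generate(grounded_prompt, evidence.image_refs)    # only now generate
```

Passing the tools in as plain functions keeps the sketch runnable with stubs, and it mirrors the point of the design: the generator is the last call in the chain, not the first.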

To test all this properly, the team built KnowGen, a benchmark covering around 20 real-world categories and designed specifically to test whether generated images are actually accurate. A lot of image generation benchmarks reward aesthetic quality. KnowGen rewards getting things right.

Bring Your Own Image Generator

Gen-Searcher is a framework as much as it is a model. The 8B model is the research agent. The image generator is a separate layer sitting underneath it. The team used Qwen-Image-Edit as their default, but they built it to be swapped out.

Their own benchmarks support this. They tested the same Gen-Searcher-8B agent with three completely different image generators (Qwen-Image, Seedream 4.5, and Nano Banana Pro), and all three improved. The agent doesn’t care what’s generating the final image. It just hands over better, more grounded instructions than a raw prompt would.

So if you already have a preferred image generator, you’re not locked out. You can bring Gen-Searcher in as the research layer and keep generating with whatever backend you’re already using. The swap requires editing one API file in the repo.
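
To make the idea of a swappable backend concrete, here is a minimal sketch of what such a boundary could look like. It is an assumption about the shape of the interface, not the repo’s actual API file: `ImageBackend`, `EchoBackend`, and `render` are hypothetical names invented for this example.

```python
from typing import List, Protocol


class ImageBackend(Protocol):
    """Anything that turns a grounded prompt plus reference images into image bytes."""

    def generate(self, prompt: str, reference_images: List[str]) -> bytes: ...


class EchoBackend:
    """Stand-in for local testing: returns the prompt as bytes instead of an image."""

    def generate(self, prompt: str, reference_images: List[str]) -> bytes:
        return prompt.encode("utf-8")


def render(backend: ImageBackend, grounded_prompt: str, refs: List[str]) -> bytes:
    """The research layer only depends on the generate() method, not on a specific model."""
    return backend.generate(grounded_prompt, refs)
```

Swapping generators then means writing one more class with a `generate` method that calls your preferred service; nothing upstream of `render` has to change.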

Not All Generators Benefit Equally

| Model | Science (visual correlation) | Science (text accuracy) | Science (faithfulness) | Pop Culture (visual correlation) | Pop Culture (text accuracy) | Overall K-Score |
|---|---|---|---|---|---|---|
| GPT-Image-1 (Reference) | 20.92 | 27.89 | 72.79 | 19.43 | 31.98 | 34.19 |
| Qwen-Image (Alone) | 6.80 | 0.34 | 47.45 | 7.59 | 1.40 | 14.98 |
| Gen-Searcher-8B + Qwen-Image | 26.87 | 17.18 | 65.14 | 25.30 | 23.55 | 31.52 |
| Seedream 4.5 (Alone) | 14.46 | 26.19 | 64.46 | 12.50 | 31.77 | 31.01 |
| Gen-Searcher-8B + Seedream 4.5 | 36.35 | 43.52 | 75.77 | 39.04 | 45.86 | 47.29 |
| Nano Banana Pro (Alone) | 39.46 | 49.32 | 86.22 | 30.51 | 53.37 | 50.38 |
| Gen-Searcher-8B + Nano Banana Pro | 45.07 | 49.32 | 86.56 | 43.01 | 52.30 | 53.30 |

Slotting Gen-Searcher in front of different image generators doesn’t produce the same jump across the board, and that’s actually useful information if you’re deciding whether to bother.

Seedream 4.5 went from an overall K-Score of 31.01 to 47.29 with Gen-Searcher in front of it. For context, that puts it ahead of GPT-Image-1, a closed, commercial model that most people can’t run locally, which scored 34.19 on the same benchmark.

Nano Banana Pro moved from 50.38 to 53.30. Smaller jump, but it was already the strongest baseline in the test. There’s less room to grow when you start that high.

Qwen-Image went from 14.98 to 31.52, more than doubling its score. If you’re already running Qwen-Image locally, adding Gen-Searcher on top is probably the highest-value thing you can do to it.

The direction is the same for all three: scores on knowledge-heavy prompts go up, sharply for the weaker baselines and modestly for the strongest one. Aesthetics stay roughly flat. Gen-Searcher makes your generator smarter, not prettier, which is worth knowing before you expect it to fix everything.
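
If you want to check the size of each jump yourself, the arithmetic is easy to reproduce from the Overall K-Score column of the table. The snippet below is just that arithmetic; the dictionary and the `gain` helper are names made up for this example.

```python
# Overall K-Score (alone, with Gen-Searcher-8B), copied from the table above.
K_SCORES = {
    "Qwen-Image": (14.98, 31.52),
    "Seedream 4.5": (31.01, 47.29),
    "Nano Banana Pro": (50.38, 53.30),
}


def gain(alone: float, with_agent: float) -> tuple:
    """Return (absolute gain in points, relative gain in percent)."""
    absolute = round(with_agent - alone, 2)
    relative = round(100 * (with_agent - alone) / alone, 1)
    return absolute, relative


for name, (alone, with_agent) in K_SCORES.items():
    absolute, relative = gain(alone, with_agent)
    print(f"{name}: +{absolute} points ({relative}% relative)")
```

Qwen-Image and Seedream 4.5 gain almost the same number of points (about 16.5 and 16.3), but because Qwen-Image starts so much lower, its relative gain is roughly 110% against roughly 52% for Seedream 4.5. Nano Banana Pro gains under 3 points, around 6%.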

How to Try It

The easiest entry point is the model weights directly on Hugging Face. Gen-Searcher-8B is there along with the SFT variant, the training datasets, and the KnowGen benchmark — all in one place. The model weights are Apache 2.0 licensed, so you can use them freely.

If you want to go deeper, the full pipeline is on GitHub — training code, inference scripts, the agentic workflow, everything. You’ll need to set up a Serper API key for web search, a Jina API key for browsing, and have a compatible image generator running as a separate service. Their default is Qwen-Image-Edit served via FastAPI, but as covered earlier, that part is yours to swap.
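
Before running anything, it can help to confirm that all three external pieces are configured. The check below is a small sketch of that idea. The variable names `SERPER_API_KEY`, `JINA_API_KEY`, and `IMAGE_GEN_URL` are placeholders chosen for this example, not names taken from the repo, so check the README for the ones the project actually reads.

```python
import os
from typing import List, Mapping, Optional

# Hypothetical setting names; the real project may use different ones.
REQUIRED = {
    "SERPER_API_KEY": "web search (Serper)",
    "JINA_API_KEY": "page browsing (Jina)",
    "IMAGE_GEN_URL": "image generator service endpoint",
}


def missing_settings(env: Optional[Mapping[str, str]] = None) -> List[str]:
    """List every required setting that is unset or empty."""
    env = os.environ if env is None else env
    return [
        f"{name}: {purpose}"
        for name, purpose in REQUIRED.items()
        if not env.get(name)
    ]


if __name__ == "__main__":
    problems = missing_settings()
    if problems:
        print("Missing configuration:")
        for line in problems:
            print("  " + line)
    else:
        print("All three services are configured.")
```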

For inference alone without any training, the hardware requirement drops significantly compared to the training setup. Still not lightweight, but far more approachable.

Start with the Hugging Face page, read the GitHub README before touching anything else, and set your expectations right: this takes some setup. It’s worth it if knowledge-accurate image generation is something you actually need.

The Agent That Searches Before It Draws

Most improvements in image generation are about making the output look better. Gen-Searcher is about making it know more.

That’s a different problem to solve and honestly a more interesting one. This project is trying to close the gap between a generated image that looks right and one that actually is right. Based on what the benchmarks show, it closes it meaningfully.

It won’t replace your current setup overnight. The hardware ask is real, the search tool dependency is real, and you’ll notice the performance gap that comes from using public APIs instead of the team’s original tools. None of that is hidden; the team is upfront about all of it.

For anyone building pipelines that need images grounded in current real-world information, this is probably the most useful open release in that specific space right now.